The Best Resource about Binary Code

ConvertBinary offers a set of free tools, reference tables, and tutorials for binary code conversion.

Binary Code Tools

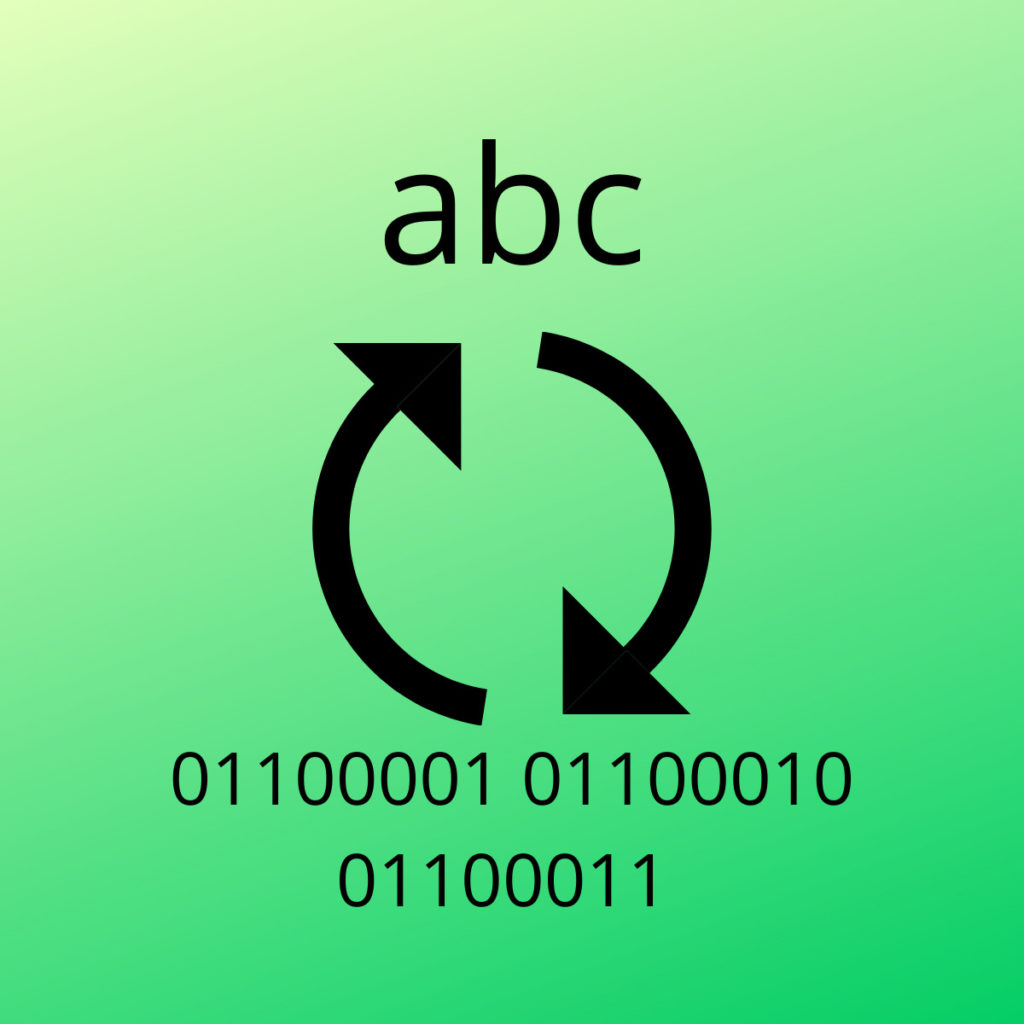

Binary Translator

Use this binary code translator when you need to convert binary code to text!

Text to Binary

Convert any text to binary code, instantly as you type: have fun encoding your messages in binary code!

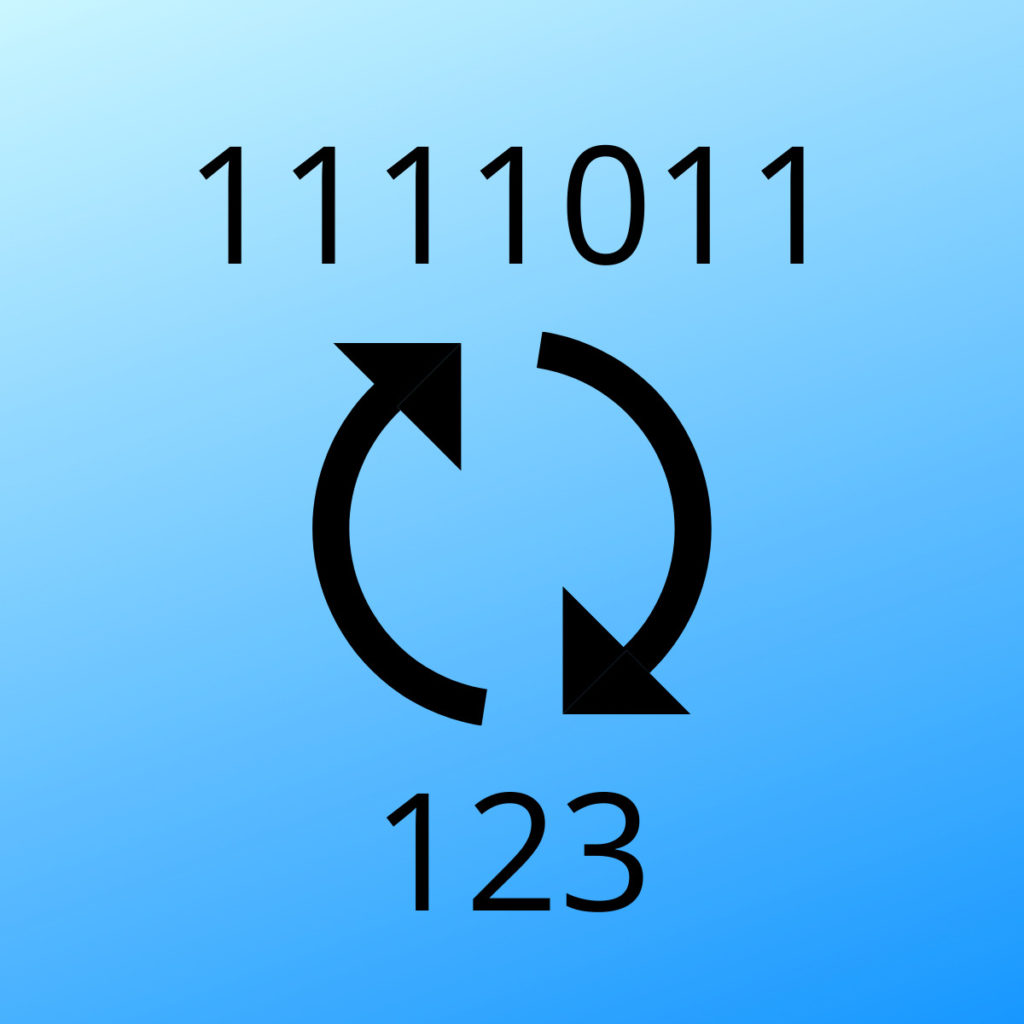

Binary to Decimal

Convert binary numbers to their decimal representation with our free binary to decimal converter.

Decimal to Binary

Convert decimal numbers to their binary representation with our free decimal to binary converter.

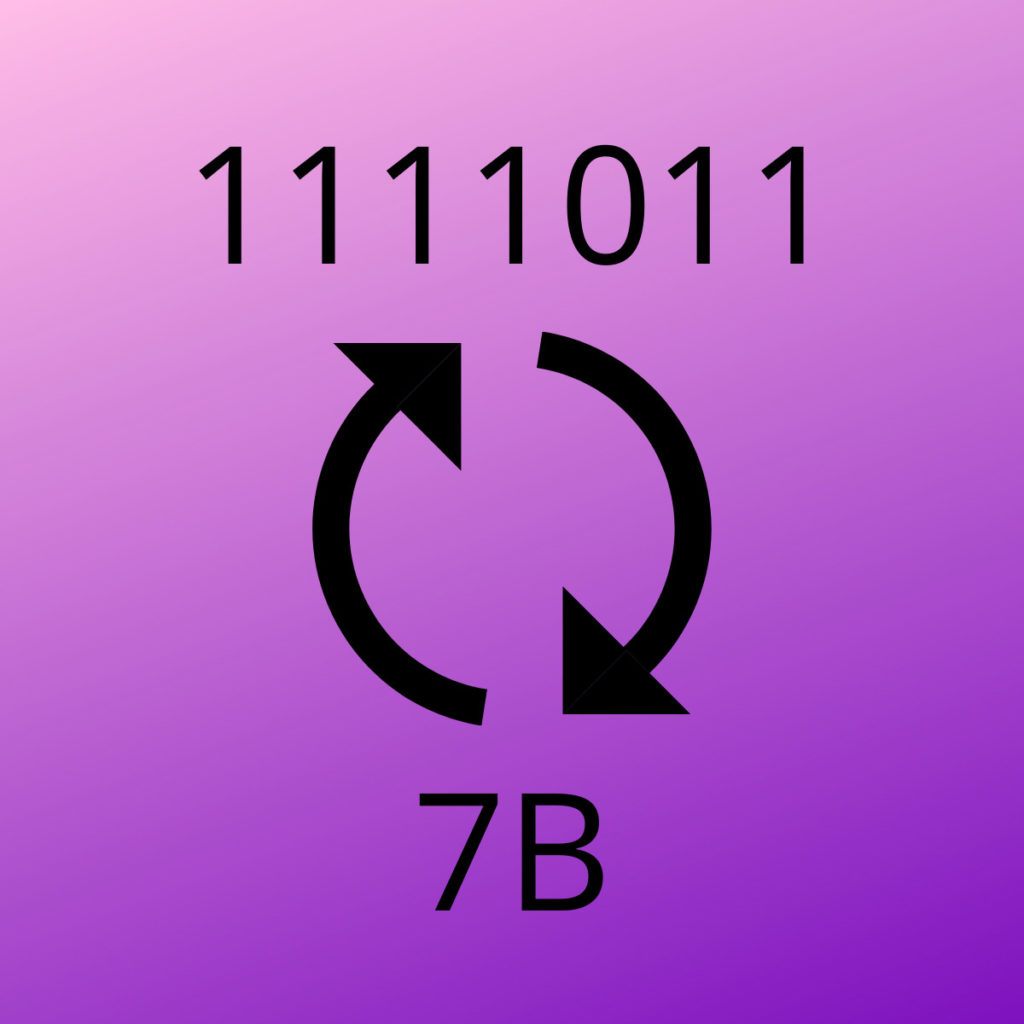

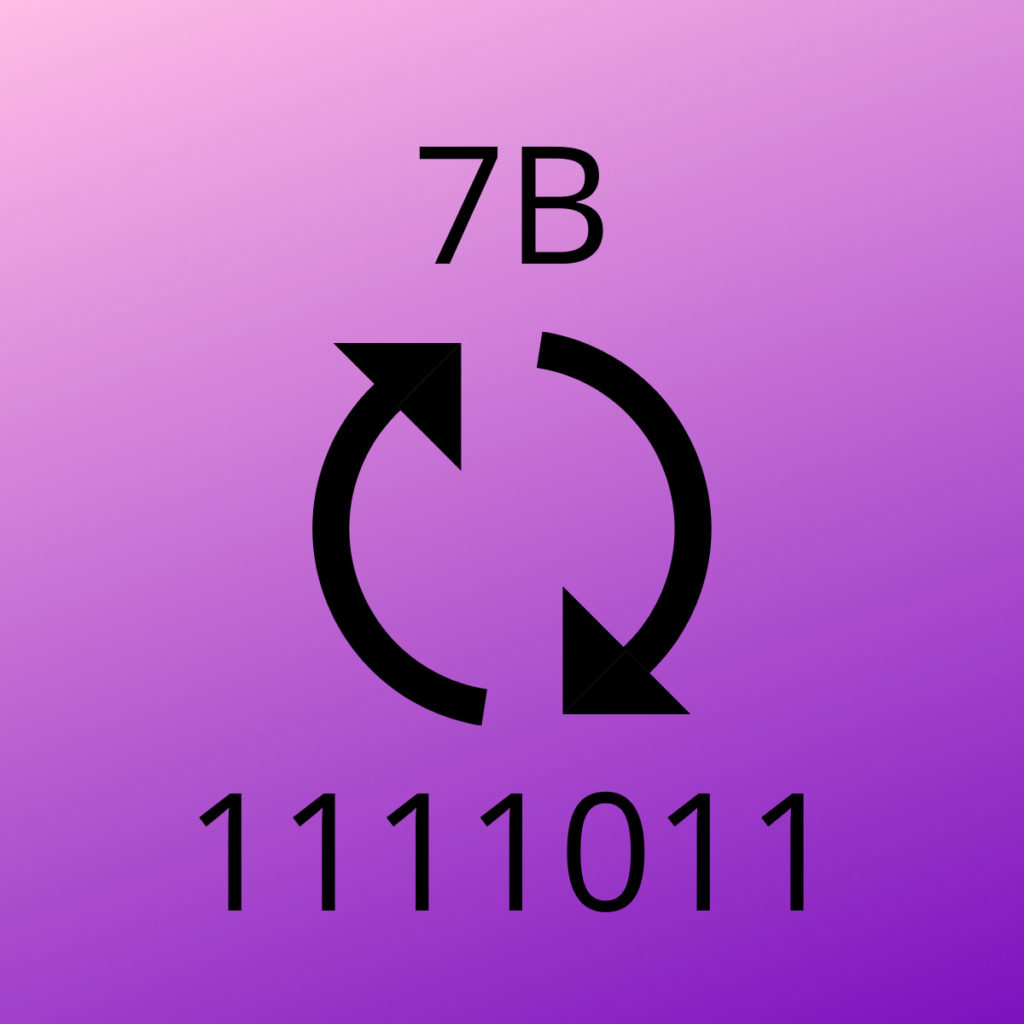

Binary to Hexadecimal

This online converter helps you quickly convert any binary number to hexadecimal.

Hexadecimal to Binary

This online converter helps you quickly convert any hexadecimal number (up to 7FFFFFFFFFFFFFFF, the largest signed 64-bit integer) to binary.

Do you have questions about any of our Tools? Check out the FAQs.

Binary Code Tables

Binary Alphabet

A table containing all the letters of the Latin alphabet as encoded in ASCII (both uppercase and lowercase), along with their binary code representation.

Binary Numbers

A table of the decimal numbers from 0 to 100 along with their binary code representation.

Binary Code Conversion Tutorials

How to Convert Binary to Text

Learn how to convert binary-encoded text into regular, human-readable text with this easy-to-read, step-by-step tutorial. Visual learners will find lots of images and a video explainer.

How to Convert Text to Binary

Learn how to convert any text to binary code with this easy-to-read, step-by-step tutorial. Visual learners will find lots of images and a video explainer.

How to Convert Decimal to Binary

Learn how to convert decimal numbers to their binary representation with this easy-to-read, step-by-step tutorial. Visual learners will find lots of images and a video explainer.

How to Convert Binary to Decimal

Learn how to convert binary numbers to their decimal representation with this easy-to-read, step-by-step tutorial. Visual learners will find lots of images and a video explainer.

How to Convert Hexadecimal to Binary

Learn how to convert hexadecimal numbers to their binary representation with this easy-to-read, step-by-step tutorial. Visual learners will find lots of images and a video explainer.

How to Convert Binary to Hexadecimal

Learn how to convert binary numbers to their hexadecimal representation with this easy-to-read, step-by-step tutorial. Visual learners will find lots of images and a video explainer.
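For readers who prefer code to prose, the number conversions covered by these tutorials can be sketched in a few lines of Python. This is only an illustration under assumptions: the function names are hypothetical, and the methods shown (repeated division by 2, positional doubling, and the 4-bits-per-hex-digit mapping) are the standard textbook techniques, not necessarily the exact steps the tutorials follow.

```python
def decimal_to_binary(n: int) -> str:
    """Repeatedly divide by 2 and collect the remainders (non-negative n only)."""
    if n < 0:
        raise ValueError("this sketch handles non-negative integers only")
    if n == 0:
        return "0"
    digits = []
    while n > 0:
        n, remainder = divmod(n, 2)
        digits.append(str(remainder))
    # Remainders come out least-significant first, so reverse them.
    return "".join(reversed(digits))


def binary_to_decimal(bits: str) -> int:
    """Read left to right: double the running total, then add the next bit."""
    total = 0
    for bit in bits:
        if bit not in ("0", "1"):
            raise ValueError(f"not a binary digit: {bit!r}")
        total = total * 2 + int(bit)
    return total


def hex_to_binary(hex_digits: str) -> str:
    """Each hexadecimal digit maps to exactly four bits."""
    return "".join(format(int(digit, 16), "04b") for digit in hex_digits)


def binary_to_hex(bits: str) -> str:
    """Pad on the left to a multiple of 4 bits, then convert each group."""
    padded = bits.zfill((len(bits) + 3) // 4 * 4)
    groups = (padded[i:i + 4] for i in range(0, len(padded), 4))
    return "".join(format(int(group, 2), "X") for group in groups)


print(decimal_to_binary(100))        # 1100100
print(binary_to_decimal("1100100"))  # 100
print(hex_to_binary("7F"))           # 01111111
print(binary_to_hex("1111111"))      # 7F
```

In everyday Python code the built-ins `bin`, `hex`, and `int(text, base)` do the same job; the hand-written versions are here only to make the steps visible.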

FAQs about Binary Code

A collection of the most Frequently Asked Questions about the Binary Code and the Binary Number System:

Binary code is a way to represent information (text, computer instructions, images, or data in any other form) using only two symbols, usually “0” and “1” from the binary number system.

The modern binary number system was devised by Gottfried Leibniz, who worked it out around 1679 and published it in 1703 in his article “Explication de l'Arithmétique Binaire” (“Explanation of the binary arithmetic”).

In order to convert binary to text, you have two options: you can either use an online translator (like the one provided for free by ConvertBinary.com), or you can do it manually.

If you want to learn how to convert binary code to text manually, you can read this guide, or watch the associated tutorial.
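As a rough idea of what the manual method involves, here is a minimal Python sketch. It assumes the input is made of space-separated 8-bit groups, each holding one ASCII character code; the function name `binary_to_text` is hypothetical and is not the code behind the online translator.

```python
def binary_to_text(binary: str) -> str:
    """Decode space-separated 8-bit groups into text, one character per group."""
    characters = []
    for group in binary.split():
        # Reject anything that is not exactly eight 0/1 digits.
        if len(group) != 8 or set(group) - {"0", "1"}:
            raise ValueError(f"not an 8-bit binary group: {group!r}")
        code = int(group, 2)          # binary string -> number, e.g. 01001000 -> 72
        characters.append(chr(code))  # number -> character, e.g. 72 -> "H"
    return "".join(characters)


print(binary_to_text("01001000 01101001"))  # Hi
```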

There are many possible reasons for that: for example, you may want to send someone a message that is not immediately readable, or maybe a game that you are playing is challenging you with some binary code that you need to translate in order to move forward.

Also, you may find some random binary code on the internet, and then you want to know what it means.

Perhaps you need to practice binary conversion to get a better understanding of how computers use binary, or maybe you simply enjoy playing with nerdy stuff.

Absolutely! Computers use binary – the digits 0 and 1 – to store data. A binary digit, or bit, is the smallest unit of data in computing.

Computers use binary to represent everything (e.g., instructions, numbers, text, images, videos, sound, color, etc.) they need to store or execute.
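To make this concrete, the short Python sketch below prints the bit patterns behind a piece of text, a number, and a color. The helper names and the sample values are assumptions chosen for illustration, and the text helper only handles ASCII characters.

```python
def text_to_binary(text: str) -> str:
    """Encode ASCII text as space-separated 8-bit groups."""
    return " ".join(format(byte, "08b") for byte in text.encode("ascii"))


def color_to_binary(red: int, green: int, blue: int) -> str:
    """Show an RGB color as three 8-bit channels (each value 0-255)."""
    for channel in (red, green, blue):
        if not 0 <= channel <= 255:
            raise ValueError("each channel must be between 0 and 255")
    return " ".join(format(channel, "08b") for channel in (red, green, blue))


print(text_to_binary("Hi"))          # 01001000 01101001
print(format(42, "b"))               # 101010
print(color_to_binary(255, 128, 0))  # 11111111 10000000 00000000
```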

Even if you write your program source code in a high-level language like Java or C#, which compile down to an intermediate language (bytecode and CIL, respectively), the required runtime environment that interprets and/or just-in-time compiles the intermediate language is a binary executable, and the operating system it runs on also consists of binary instructions.

Because, in the early days of electronic computing, hardware that used only zeros and ones was easier to design and work with.

Every modern computer has a Central Processing Unit (CPU) which performs all arithmetic calculations and also performs logical operations.

The CPU is made of millions, and in modern chips billions, of tiny components called transistors, which are basically switches. When a switch is ON it represents 1, and when it is OFF it represents 0.

Therefore, all computer programs must ultimately be translated to binary code instructions that a CPU can interpret and execute.

Binary is important because of its implementation in modern computer hardware.

In the early days of computing, computers made use of analog components to store data and perform their calculations. But this wasn’t as accurate as binary code, because the analog method caused small errors that, compounding on each other, caused significant issues.

Binary numbers simplified the design of computers, since transistors based on binary logic (where inputs and outputs can only be On or Off) turned out to be cheaper and simpler to build, and also much more reliable to operate.

For a computer scientist, having an understanding of how computers store and manipulate information is essential.

If you do anything with hardware, Boolean logic and binary are closely intertwined and indispensable.

Most modern programming languages abstract the binary representation from the programmer, but the underlying code still gets translated to binary before it is sent to the machine for processing.

Modern computers use binary code to store and manipulate information, because their circuitry can easily read an On or Off charge, and transmit it very reliably too.

Furthermore, binary logic is easy to understand and can be used to build any type of logic gate.
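One way to see this is to simulate gates in software. The Python sketch below starts from a single NAND gate and builds NOT, AND, OR, and XOR from it, then wires them into a half adder that adds two bits. It is a toy model under assumptions (bits are the integers 0 and 1, and all function names are hypothetical), not a description of any particular circuit.

```python
def nand(a: int, b: int) -> int:
    """Output 0 only when both inputs are 1."""
    return 0 if (a and b) else 1


def not_(a: int) -> int:
    return nand(a, a)


def and_(a: int, b: int) -> int:
    return not_(nand(a, b))


def or_(a: int, b: int) -> int:
    return nand(not_(a), not_(b))


def xor(a: int, b: int) -> int:
    return and_(or_(a, b), nand(a, b))


def half_adder(a: int, b: int) -> tuple:
    """Add two bits and return (sum bit, carry bit)."""
    return xor(a, b), and_(a, b)


for a in (0, 1):
    for b in (0, 1):
        print(a, b, half_adder(a, b))
```

The last row printed shows 1 + 1 giving a sum bit of 0 and a carry bit of 1, which is 10 in binary.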

There is no such thing as a “universal binary language”. Binary is a way of encoding information (for example, characters) as numbers, and encoding does not change the content of that information.

For this reason, if you encode a sentence written in English into binary and then decode it back to ASCII text, it will still read in English; it won’t be translated automatically into another language.

Binary Code Goodies

Binary Clock

Try to read the time on this BCD (binary-coded decimal) clock: it shows each decimal digit of the time in binary code.
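If you are curious how such a clock encodes the time, here is a minimal Python sketch. It assumes the usual binary-coded decimal layout, where each decimal digit of HH:MM:SS gets its own 4-bit group; the function name `bcd_clock` is hypothetical, and the clock on the site may lay out its bits differently.

```python
def bcd_clock(hours: int, minutes: int, seconds: int) -> list:
    """Return six 4-bit strings, one per decimal digit of HH:MM:SS."""
    if not (0 <= hours <= 23 and 0 <= minutes <= 59 and 0 <= seconds <= 59):
        raise ValueError("time out of range")
    digits = f"{hours:02d}{minutes:02d}{seconds:02d}"
    # Each decimal digit (0-9) fits in four bits.
    return [format(int(digit), "04b") for digit in digits]


print(bcd_clock(12, 34, 56))
# ['0001', '0010', '0011', '0100', '0101', '0110']
```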

Binary Joke

There is a well-known mathematical joke about binary code. It starts with “There are only 10 types of people in the world…”

A (binary) message for you

01001000 01100001 01110110 01100101 00100000 01100110 01110101 01101110 00100000 01110111 01101001 01110100 01101000 00100000 01000010 01101001 01101110 01100001 01110010 01111001 00100000 01000011 01101111 01100100 01100101 00100000 00111010 00101101 01000100 00001010 01010100 01100101 01101100 01101100 00100000 01111001 01101111 01110101 01110010 00100000 01100110 01110010 01101001 01100101 01101110 01100100 01110011 00100000 01100001 01100010 01101111 01110101 01110100 00100000 01000011 01101111 01101110 01110110 01100101 01110010 01110100 01000010 01101001 01101110 01100001 01110010 01111001 00101110 01100011 01101111 01101101 00001010 01000100 01101111 01101110 00100111 01110100 00100000 01100110 01101111 01110010 01100111 01100101 01110100 00100000 01110100 01101111 00100000 01010011 01101001 01100111 01101110 00100000 01010101 01110000 00100000 01100110 01101111 01110010 00100000 01101111 01110101 01110010 00100000 01001110 01100101 01110111 01110011 01101100 01100101 01110100 01110100 01100101 01110010 00100001

Translate this message with the Binary Translator